Here's a conversation happening in boardrooms everywhere:

"We need to implement AI before it replaces us all."

The fear is real. The understanding? Often completely wrong.

When most people say "AI," they're actually thinking about AGI—a hypothetical superintelligence that can do anything a human can do, and more. The problem is: AGI doesn't exist. And it might not for decades. Or ever.

What does exist is narrow AI—incredibly powerful tools designed for specific tasks. Understanding the difference isn't just academic. It's the key to making smart decisions about technology in your organization.

Let's break it down.

The Fundamental Difference

Narrow AI — What Exists Now

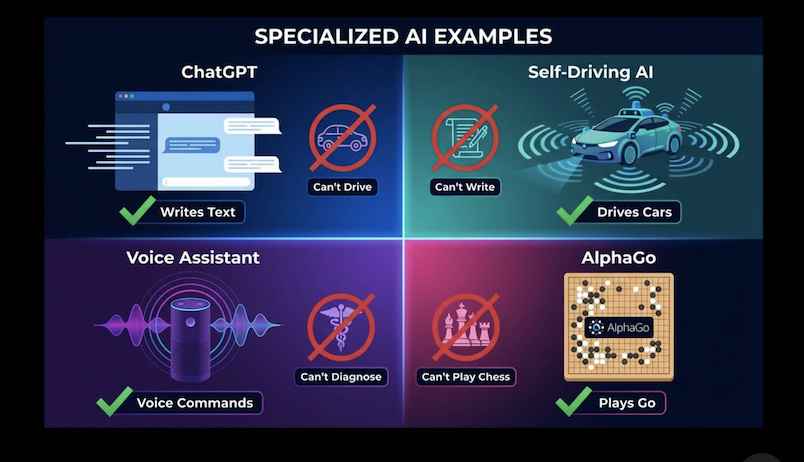

Designed for ONE specific task only.

- ChatGPT: Writes text, can't drive

- Tesla Autopilot: Drives, can't write essays

- Siri: Voice commands, can't diagnose diseases

- AlphaGo: Plays Go, can't play chess

Brilliant at one thing. Useless at everything else.

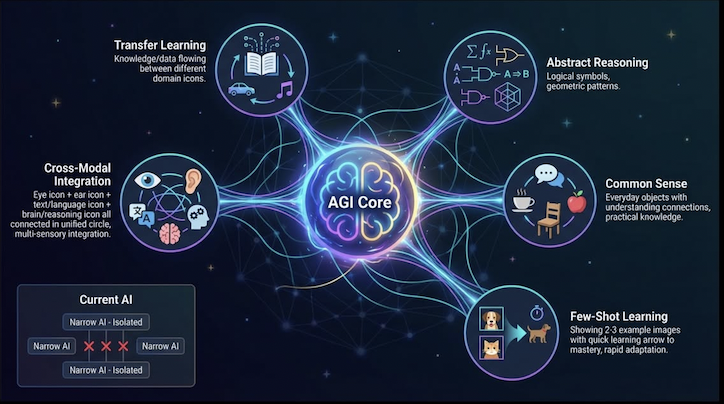

AGI — What Doesn't Exist Yet

Would understand, learn, and perform ANY intellectual task a human can.

- Write, drive, diagnose, compose music

- Learn new skills without retraining

- Transfer knowledge across domains

- One unified intelligence for all tasks

Status: DOESN'T EXIST. Purely theoretical.

Why This Confusion Is Costly

When executives hear "AI," many imagine AGI—a system that can reason, learn, and adapt to any situation. They invest expecting magic. What they get is a powerful but narrow tool that requires careful scoping. The mismatch leads to failed projects and wasted budgets.

How Current AI Actually Works

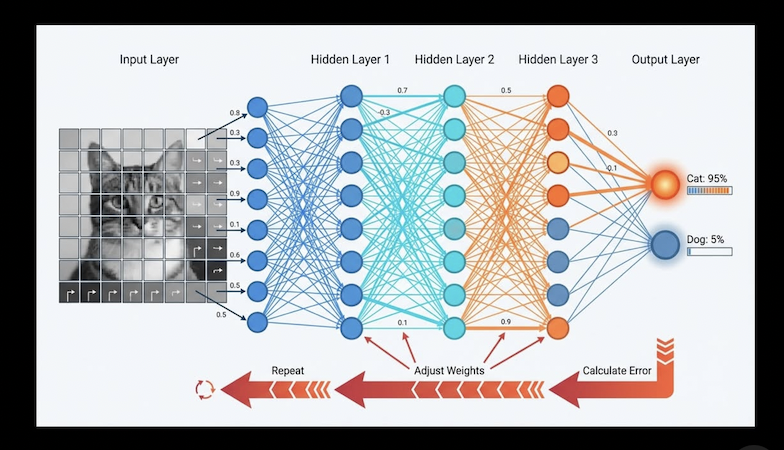

You don't need a computer science degree to understand AI. Here's the essential process that powers everything from ChatGPT to image recognition:

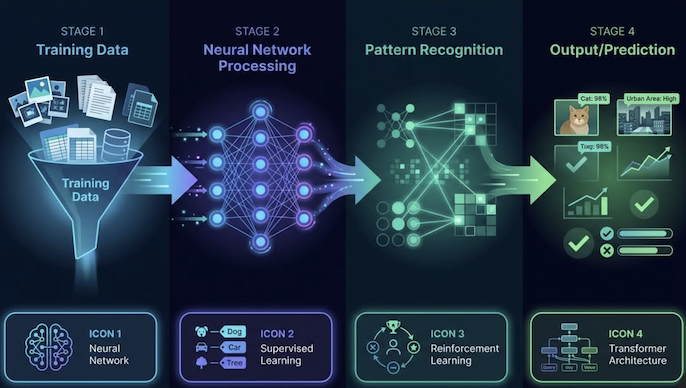

The 4-Stage Process:

- Training Data: feed in millions of examples

- Neural Network: find patterns in the data

- Pattern Recognition: learn correlations

- Output: apply the learned patterns to new data
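The four stages can be sketched in a few lines of Python. This is a minimal illustration, not how production systems are built: it swaps in the simplest possible "network", a single artificial neuron trained with the classic perceptron rule, on a small made-up dataset. The data and the `train`/`predict` helper names are hypothetical.

```python
# Stage 1: training data (hypothetical toy examples).
# Each input is (hours_studied, hours_slept); label 1 = passed, 0 = failed.
TRAINING_DATA = [
    ((1.0, 4.0), 0), ((2.0, 5.0), 0), ((3.0, 4.5), 0),
    ((6.0, 7.0), 1), ((7.0, 8.0), 1), ((8.0, 6.5), 1),
]

def predict(weights, bias, x):
    # Stage 4: weighted sum of the inputs, thresholded at zero.
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0

def train(data, max_epochs=10_000):
    # Stages 2-3: perceptron learning rule. Every time the neuron gets an
    # example wrong, nudge its weights toward the correct answer.
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, label in data:
            error = label - predict(weights, bias, x)  # -1, 0 or +1
            if error != 0:
                mistakes += 1
                weights = [w + error * xi for w, xi in zip(weights, x)]
                bias += error
        if mistakes == 0:  # every training example is now classified correctly
            break
    return weights, bias

weights, bias = train(TRAINING_DATA)
print(predict(weights, bias, (7.5, 7.0)))  # unseen input, prints 1
print(predict(weights, bias, (2.0, 4.5)))  # unseen input, prints 0
```

Note that the model only "knows" the pattern in these six examples; it has no idea what studying or sleeping is.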

Neural Networks: The Building Blocks

Neural networks are layers of mathematical functions that mimic (very roughly) how neurons in the brain connect. Data flows through layers, and each layer extracts increasingly complex patterns.
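To make "data flows through layers" concrete, here is a minimal sketch of a forward pass through a two-layer network in plain Python. The weights are arbitrary illustrative numbers, not trained ones, and `dense`, `relu`, and `forward` are hypothetical helper names rather than any library's API.

```python
def relu(values):
    # Non-linearity: negative signals are zeroed out, positive ones pass through.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # One layer: each neuron computes a weighted sum of all inputs plus a bias.
    return [
        sum(w * x for w, x in zip(neuron_weights, inputs)) + b
        for neuron_weights, b in zip(weights, biases)
    ]

# Illustrative (untrained) parameters: 3 inputs -> 2 hidden neurons -> 1 output.
HIDDEN_W = [[0.5, -1.0, 0.25], [1.0, 0.5, -0.5]]
HIDDEN_B = [0.0, 0.1]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.5]

def forward(x):
    hidden = relu(dense(x, HIDDEN_W, HIDDEN_B))  # layer 1 extracts simple features
    return dense(hidden, OUTPUT_W, OUTPUT_B)     # layer 2 combines those features

print(forward([1.0, 2.0, 3.0]))
```

For the input `[1.0, 2.0, 3.0]`, the first hidden neuron's weighted sum is negative, so ReLU switches it off, and the network outputs -0.7. Training is what would replace these hand-picked weights with useful ones.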

The critical limitation: Each network needs massive training for each specific task. A network trained to recognize cats can't suddenly write poetry—it would need to be completely retrained.

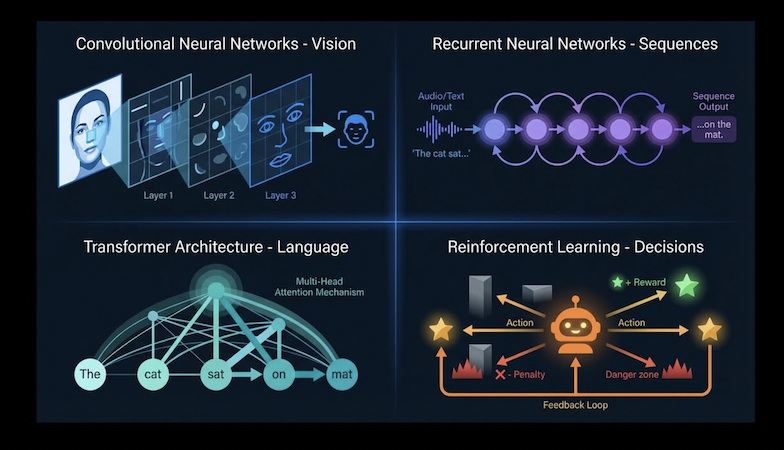

The Key AI Algorithms (Simplified)

Different problems require different types of AI. Here are the main categories you'll encounter:

CNNs (Convolutional Neural Networks)

Use: Image and video recognition

Example: Face recognition, self-driving vision
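The core operation inside a CNN is convolution: a small filter (kernel) slides across the image and responds strongly wherever the local pixels match its pattern. A minimal sketch, assuming a tiny grayscale image stored as nested lists and a hand-picked vertical-edge kernel; in a real CNN the kernel values are learned during training, and there are many kernels per layer.

```python
def convolve2d(image, kernel):
    # "Valid" convolution (no padding), written as cross-correlation,
    # which is what deep-learning convolution layers compute in practice.
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# 4x4 image: dark (0) on the left half, bright (1) on the right half.
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Vertical-edge detector: right pixel minus left pixel.
KERNEL = [
    [-1, 1],
    [-1, 1],
]

for row in convolve2d(IMAGE, KERNEL):
    print(row)  # each row prints [0, 2, 0]: the filter fires only at the edge
```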

RNNs (Recurrent Neural Networks)

Use: Sequential data (text, speech)

Example: Translation, voice recognition
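What makes an RNN "recurrent" is a hidden state carried from one step of the sequence to the next, so earlier inputs shape how later ones are processed. A minimal sketch of that update rule, `h_t = tanh(w_x * x_t + w_h * h_{t-1} + b)`, using scalar weights chosen for illustration; a real RNN learns weight matrices.

```python
import math

def rnn(sequence, w_x=0.8, w_h=0.5, b=0.0):
    # Illustrative scalar weights, not learned ones.
    h = 0.0  # hidden state: the network's running "memory" of the sequence
    states = []
    for x in sequence:
        # The new state depends on the current input AND the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Same final input (1.0), different history: the final states differ.
print(rnn([0.0, 0.0, 1.0])[-1])
print(rnn([1.0, 1.0, 1.0])[-1])
```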

Transformers (GPT, BERT)

Use: Language processing

Example: ChatGPT, text generation
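Transformers are built around attention: each token scores how relevant every other token is, turns those scores into weights with a softmax, and takes a weighted average of the other tokens' values. A minimal sketch of scaled dot-product attention for a single query, with made-up 2-dimensional vectors; real models use learned projections, many attention heads, and far larger dimensions.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    d = len(query)
    # Relevance of each key to the query, scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # Output: the attention-weighted average of the value vectors.
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, output

# Hypothetical key and value vectors for three tokens.
KEYS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
VALUES = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([1.0, 0.0], KEYS, VALUES)
print(weights)  # tokens 0 and 2 match the query best and get equal weight
print(output)
```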

Reinforcement Learning

Use: Decision-making, game playing

Example: AlphaGo, robotics
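Reinforcement learning works differently from the other three: instead of learning from labeled examples, an agent learns by trial and error from rewards. A minimal sketch of tabular Q-learning, one classic RL algorithm (AlphaGo itself combined deep neural networks with tree search, which is far more involved), on a hypothetical five-cell corridor where the only reward is at the right end:

```python
import random

N_STATES = 5          # cells 0..4; reaching cell 4 ends the episode with reward 1
ACTIONS = (-1, +1)    # step left or step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration rate

def train(episodes=500, seed=0):
    rng = random.Random(seed)
    # q[state][a] estimates the long-term reward of taking ACTIONS[a] in state.
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Epsilon-greedy: usually exploit the best-known action, sometimes
            # explore at random (also used to break ties).
            if rng.random() < EPSILON or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state = min(max(state + ACTIONS[a], 0), N_STATES - 1)
            reward = 1.0 if next_state == N_STATES - 1 else 0.0
            # Q-learning update: move the estimate toward
            # reward + discounted value of the best next action.
            best_next = 0.0 if next_state == N_STATES - 1 else max(q[next_state])
            q[state][a] += ALPHA * (reward + GAMMA * best_next - q[state][a])
            state = next_state
    return q

q_table = train()
policy = ["left" if row[0] > row[1] else "right" for row in q_table[:-1]]
print(policy)
```

The learned policy is "go right" in every cell, but only for this corridor: change the layout and the table is useless, which is the task-specificity the text describes.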

The key insight: Each algorithm is specialized and task-specific. There is no "general purpose" AI algorithm that can do everything. This is the fundamental barrier to AGI.

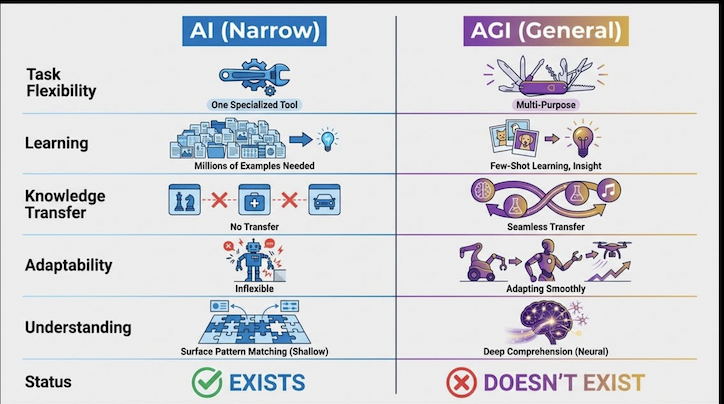

AI vs AGI: Side-by-Side Comparison

| Capability | AI (Narrow) | AGI (Theoretical) |
|---|---|---|
| Task Flexibility | One task only | Any task |
| Learning | Millions of examples needed | Few examples, generalizes instantly |
| Knowledge Transfer | Cannot transfer between tasks | Applies knowledge across domains |
| Adaptability | Breaks in novel situations | Adapts using reasoning |
| Understanding | Pattern matching (shallow) | True concept understanding |
| Status | EXISTS NOW | DOESN'T EXIST |

Why AGI Is So Hard to Build

If AI is so impressive, why can't we just make it "general"? The challenges are fundamental, not just engineering problems to solve:

Transfer Learning Problem

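A chess AI can't play checkers without complete retraining. Current AI can reuse some of what it learned on closely related tasks, for example by fine-tuning a pretrained model, but it cannot carry knowledge into an unrelated domain the way people do. Each genuinely new task means new data and new training.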

Catastrophic Forgetting

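When a neural network is trained on a new task, the updates overwrite the same weights that encoded earlier tasks, so performance on the old tasks can collapse. Humans can learn to cook without forgetting how to drive. Standard neural networks can't, unless they are trained with special techniques such as replaying examples from the old tasks.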
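A deliberately tiny illustration of the mechanism: a model with a single shared weight is trained by gradient descent on task A, then on task B only, and its error on task A is measured before and after. The two tasks are hypothetical and contradictory by design so the effect is obvious; in real networks with millions of weights the forgetting is usually less total, but it comes from the same thing, new training overwriting shared parameters.

```python
def mse(w, data):
    # Mean squared error of the model y = w * x on a dataset.
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def train(w, data, steps=200, lr=0.05):
    # Plain gradient descent on the mean squared error.
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

TASK_A = [(x, 2.0 * x) for x in (1.0, 2.0, 3.0)]   # hypothetical task A: y = 2x
TASK_B = [(x, -1.0 * x) for x in (1.0, 2.0, 3.0)]  # hypothetical task B: y = -x

w = train(0.0, TASK_A)
error_a_before = mse(w, TASK_A)
w = train(w, TASK_B)  # keep training the SAME weight, on task B only
error_a_after = mse(w, TASK_A)
print(f"Task A error after learning A: {error_a_before:.4f}")
print(f"Task A error after learning B: {error_a_after:.4f}")
```

The error on task A goes from essentially zero to 42, even though nothing about task A changed.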

No Common Sense

AI doesn't understand that "water is wet" or "objects fall down." It has no grounded understanding of the physical world—only statistical patterns in data.

Massive Computing Needs

The human brain runs on about 20 watts. Models like GPT-4 are trained and served in data centers that draw megawatts, and they are still not AGI. The energy and hardware requirements for true AGI may be prohibitive.
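A back-of-the-envelope calculation puts the gap in perspective. The 1 megawatt figure below is a hypothetical round number for illustration, the low end of "megawatts", not a published measurement for GPT-4.

```python
BRAIN_WATTS = 20             # approximate human brain power draw, from the text
CLUSTER_WATTS = 1_000_000    # hypothetical: 1 megawatt, illustrative only

ratio = CLUSTER_WATTS / BRAIN_WATTS
print(f"1 MW could power about {ratio:,.0f} human brains")  # about 50,000
```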

We Don't Understand Intelligence

We can't build what we don't understand. Neuroscience has not fully explained how human intelligence works. We're trying to replicate something we haven't decoded.

Expert Predictions on AGI Timeline:

- 2030-2040: optimistic researchers

- 2050-2070: most researchers

- 2100+ or never: pessimistic view

Why This Matters (The Bottom Line)

Understanding the AI vs AGI distinction has real implications for how you think about technology:

Current AI (Now)

- Powerful tools under human control

- Automate specific tasks excellently

- Can be regulated and contained

- Already transforming industries

AGI (Future/Theoretical)

- Would match human intelligence across ALL domains

- Could self-improve recursively

- Might develop its own goals

- Existential opportunity or risk

The Key Takeaway:

Current AI = extremely powerful tools.

AGI = a different species of intelligence.

We're nowhere close to AGI yet. Understanding this difference is crucial for discussing AI safety, regulation, and—most practically—making smart decisions about AI in your organization.

Navigating AI Can Be Confusing

The hype around AI makes it hard to separate what's real from what's science fiction. Vendors promise the moon. Headlines predict doom or salvation. Meanwhile, you're trying to figure out what AI actually means for your organization.

At Expert AI Labs, we help organizations cut through the noise. We don't sell magic—we help you understand where AI genuinely fits (and where it doesn't), what's realistic today, and how to move forward without getting burned by overpromises.

Whether you need help understanding the landscape, evaluating AI opportunities, or just want an honest conversation about what makes sense for your situation—we're happy to talk. No pressure, no pitch deck. Just a straightforward discussion.

Frequently Asked Questions

What is the difference between AI and AGI?

AI (Artificial Intelligence) refers to narrow, task-specific systems that exist today—like ChatGPT for writing or Tesla Autopilot for driving. Each is brilliant at one thing but useless at everything else. AGI (Artificial General Intelligence) would be a system that can perform ANY intellectual task a human can, transferring knowledge across domains. AGI doesn't exist yet and most researchers estimate it's decades away, if ever.

Is ChatGPT an example of AGI?

No, ChatGPT is narrow AI, not AGI. While ChatGPT is impressive at language tasks, it cannot drive a car, diagnose diseases, or learn new skills without retraining. It's a specialized tool trained on text data. AGI would be able to do all of these things and transfer knowledge between domains seamlessly.

When will AGI be achieved?

Expert predictions vary widely. Optimistic researchers suggest 2030-2040, while more conservative estimates point to 2050-2070. Some researchers believe AGI may never be achieved with current approaches. Major roadblocks include catastrophic forgetting, transfer learning limitations, and our incomplete understanding of human intelligence.

Why does understanding AI vs AGI matter for business?

Many AI projects fail because leaders expect AGI-like capabilities from narrow AI tools. Understanding the distinction helps set realistic expectations, choose appropriate use cases, and avoid investing in solutions that overpromise. Current AI is a powerful tool for specific tasks—not a magic solution.

What are the main types of AI algorithms today?

The main types include: CNNs for image recognition, RNNs for sequential data, Transformers (like GPT) for language processing, and Reinforcement Learning for decision-making. Each is specialized and task-specific—none can generalize across all domains.

Why is AGI so hard to build?

AGI faces fundamental challenges: AI can transfer knowledge only between closely related tasks, not across domains; learning new tasks tends to overwrite old knowledge (catastrophic forgetting); AI lacks common-sense understanding; the computing requirements are enormous; and we don't understand human intelligence well enough to replicate it.